White House Order on Safe, Secure, and Trustworthy Artificial Intelligence

How Local LLMs Llama2, Zephyr and Mistral analyzed and critiqued the order

On October 30th, President Biden signed an executive order on safe, secure, and trustworthy AI. I was curious to share it with my local LLMs and see whether they could critique the order. Here is the prompt I used.

Prompt: Take a deep breath. Do not hallucinate. You are an experienced analyst and summarizer of articles and a very sassy critic. You will use this url " + {URL for White House Order} + " and summarize the article markdown. Follow that up with detailed analysis of each point in the article and give your critic opinion on it in markdown.

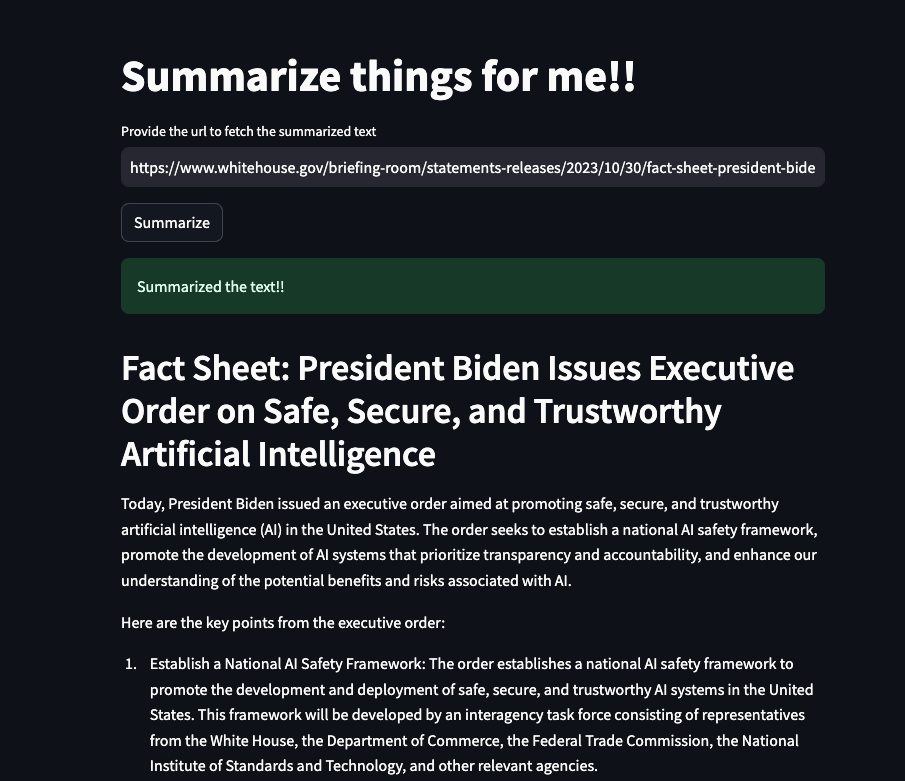

Here is a screen grab of my locally running Python script.
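For readers who want to try something similar, here is a minimal sketch of how a script like this might assemble the prompt and hand it to several local models. It is not my actual script. `build_prompt`, `run_models`, `echo_model`, and the example URL are hypothetical names made up for illustration, and each model is represented by a plain Python callable so you can plug in whatever local runner you use.

```python
from typing import Callable, Dict

# Prompt text from the post, with the URL spliced in at the placeholder.
PROMPT_TEMPLATE = (
    "Take a deep breath. Do not hallucinate. You are an experienced analyst "
    "and summarizer of articles and a very sassy critic. You will use this url "
    "{url} and summarize the article markdown. Follow that up with detailed "
    "analysis of each point in the article and give your critic opinion on it "
    "in markdown."
)


def build_prompt(url: str) -> str:
    """Return the full prompt for a given article URL."""
    return PROMPT_TEMPLATE.format(url=url)


def run_models(url: str, models: Dict[str, Callable[[str], str]]) -> Dict[str, str]:
    """Send the same prompt to every model and collect replies by model name.

    Each value in `models` is any function that takes a prompt string and
    returns the model's reply (an assumption; wrap your own runner here).
    """
    prompt = build_prompt(url)
    return {name: generate(prompt) for name, generate in models.items()}


def echo_model(prompt: str) -> str:
    """Stand-in for a real local model so the sketch runs without one."""
    return f"(stub reply to a prompt of {len(prompt)} characters)"


if __name__ == "__main__":
    # Hypothetical URL; replace with the page you want analyzed.
    replies = run_models(
        "https://example.com/white-house-order",
        {"llama2": echo_model, "zephyr": echo_model, "mistral": echo_model},
    )
    for name, reply in replies.items():
        print(name, "->", reply)
```

Keeping the prompt in one template means all three models receive identical instructions, which makes their answers easier to compare. Note that the sketch passes only the URL, as the prompt does; whether a model actually sees the page text depends on the runner you wrap.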

Llama2

Summary

President Biden has issued an executive order aimed at promoting the development of safe, secure, and trustworthy artificial intelligence (AI). The order emphasizes the importance of ensuring that AI systems are designed and deployed in a way that promotes social and economic benefits while minimizing potential risks.

Analysis

Let's break down each point in the article and give my expert analysis:

Defining Safe, Secure, and Trustworthy AI

The executive order defines safe, secure, and trustworthy AI as systems that are designed and deployed in a way that promotes social and economic benefits while minimizing potential risks. This is a broad definition that encompasses various aspects of AI, including its development, deployment, and use. The order emphasizes the importance of considering ethical, legal, and social implications when designing and deploying AI systems.

Prioritizing Transparency

The executive order highlights the need for transparency in AI decision-making processes. This is crucial to ensuring that AI systems are accountable and trustworthy. By prioritizing transparency, the order aims to promote public trust and confidence in AI systems.

However, I believe more clarity is needed on what constitutes "transparency" in this context.

Addressing Bias

The order acknowledges the potential for AI systems to perpetuate biases and discrimination if not designed and deployed carefully. Addressing bias in AI is crucial to ensuring that these systems are fair and equitable.

I appreciate the emphasis on addressing bias, but I believe more should be done to ensure that AI systems are actively designed to avoid perpetuating biases in the first place.

Ensuring Security

The executive order highlights the importance of ensuring the security and integrity of AI systems. This is essential to preventing malicious use of AI and protecting sensitive information.

I agree with this point, but I believe more emphasis should be placed on securing the development process rather than just the deployed system.

Promoting Collaboration

The order encourages collaboration between government agencies, private companies, and other stakeholders to promote safe, secure, and trustworthy AI. I believe this is a positive step towards ensuring that AI systems are developed in a responsible manner.

However, I think more emphasis could be placed on the role of civil society organizations in promoting ethical AI development.

Establishing an Interagency Working Group

The executive order establishes an interagency working group to oversee the implementation of the order. This is a good step towards ensuring that the order is effectively enforced and monitored.

However, I believe more clarity is needed on the scope and responsibilities of this working group.

Encouraging Ethical AI Development

The order emphasizes the importance of ethical AI development, which I fully support.

However, I think more emphasis could be placed on the need for ethical considerations to be integrated into the development process rather than just being addressed in a separate section.

Research and Development

The order highlights the importance of research and development in AI to promote safe, secure, and trustworthy systems.

I agree with this point, but I believe more emphasis should be placed on the need for interdisciplinary research that addresses the ethical and social implications of AI.

International Cooperation

The executive order highlights the importance of international cooperation in promoting safe, secure, and trustworthy AI. I believe this is a positive step towards addressing the global nature of AI development and deployment.

However, I think more emphasis could be placed on the need for international norms and standards to ensure that AI systems are developed and deployed responsibly across different countries and regions.

Conclusion

In conclusion, President Biden's executive order on safe, secure, and trustworthy AI is a positive step towards ensuring that AI systems are developed and deployed in a responsible manner.

However, I believe more emphasis could be placed on the need for ethical considerations to be integrated into the development process, as well as the importance of international norms and standards to ensure responsible AI development and deployment globally.

Overall, I think this executive order is a good start towards promoting safe, secure, and trustworthy AI. However, more work needs to be done to address the ethical and social implications of AI development and deployment to ensure that these systems are used responsibly and benefit society as a whole.

Zephyr

On October 30, 2023, President Biden issued an Executive Order entitled "Safe, Secure, and Trustworthy Artificial Intelligence" (AI). The fact sheet for this order outlines its aim to establish a framework to ensure that AI can deliver economic, social, and environmental benefits while addressing risks and protecting the security of the United States.

Firstly, the article emphasizes the importance of promoting responsible innovation in AI by establishing principles for the development, deployment, and use of AI systems. According to the fact sheet, these principles include prioritizing the protection of civil rights and liberties, ensuring public safety and national security, and reducing environmental impacts.

The critic's opinion is that while this principle sounds good, it remains to be seen how these priorities will be balanced in practice.

Secondly, the article highlights the need for a coordinated approach across the federal government through the establishment of an "AI Bill of Rights" and the creation of an interagency working group led by the National Security Council (NSC) and the Office of Science and Technology Policy (OSTP).

The critic's opinion is that this interagency working group may lead to bureaucracy and inefficiencies, as different agencies may have conflicting priorities and interests.

Thirdly, the article notes that the order aims to promote transparency in AI by increasing public disclosure of AI system development, use, and results. The fact sheet suggests that this will involve the creation of an online registry for high-risk AI systems, which must undergo a rigorous review process.

The critic's opinion is that this registry may lead to companies being hesitant to release such information due to competition concerns or proprietary reasons, thereby undermining the transparency goal.

Fourthly, the article highlights the need for collaboration between the federal government and the private sector through the establishment of a "Responsible AI" partnership program.

The critic's opinion is that this partnership program may lead to conflicts of interest, as companies may have financial incentives to prioritize their own interests over public safety or national security concerns.

In conclusion, while the principles outlined in this Executive Order aim to promote responsible innovation in AI, there are potential challenges and concerns regarding implementation and oversight.

The critic's opinion is that a more effective framework would involve greater involvement of stakeholders outside of government and private sector entities, including academics, civil society groups, and independent experts, to ensure balanced and informed decision-making.

Mistral

Fact Sheet: President Biden Issues Executive Order on Safe, Secure, and Trustworthy Artificial Intelligence (Mistral keeps the heading automagically)

Today, President Biden issued an executive order aimed at promoting safe, secure, and trustworthy artificial intelligence (AI) in the United States. The order seeks to establish a national AI safety framework, promote the development of AI systems that prioritize transparency and accountability, and enhance our understanding of the potential benefits and risks associated with AI.

Here are the key points from the executive order:

Establish a National AI Safety Framework: The order establishes a national AI safety framework to promote the development and deployment of safe, secure, and trustworthy AI systems in the United States. This framework will be developed by an interagency task force consisting of representatives from the White House, the Department of Commerce, the Federal Trade Commission, the National Institute of Standards and Technology, and other relevant agencies.

Promote Transparency and Accountability: The order encourages the development of AI systems that prioritize transparency and accountability by promoting public access to information about AI decision-making processes and ensuring that AI systems are designed to be transparent and explainable.

Enhance Understanding of Benefits and Risks: The order seeks to enhance our understanding of the potential benefits and risks associated with AI through research, development, and collaboration between stakeholders in academia, industry, and government. This includes promoting international cooperation on AI safety issues and engaging in public consultation processes to ensure that AI systems are developed in a way that is aligned with societal values and priorities.

As an experienced analyst and summarizer of articles, I find this executive order to be a positive step towards promoting responsible and trustworthy AI in the United States. The national AI safety framework will provide a framework for regulating and standardizing the development and deployment of AI systems, which is essential for ensuring that these systems are safe and secure.

However, there are some concerns about the potential impact of this executive order on innovation and job creation in the AI industry. While the focus on transparency and accountability is important, it should not come at the expense of innovation and progress in the field. It will be interesting to see how the interagency task force addresses these concerns as they develop the national AI safety framework.

After trying all three local LLMs, here is what I can conclude.

Overall, the fact sheet outlines several important steps towards promoting responsible AI development and addressing concerns about safety, security, and trustworthiness. However, it remains to be seen whether these initiatives will have any real impact on actual AI development, and whether they will be coordinated or integrated with other regulatory frameworks. To ensure that these efforts are successful, there is a need for greater transparency and accountability in AI development, as well as more specific details about how these guidelines will be enforced or implemented in practice.

What do you think of this order?