Hello there 👋

Here is another exciting edition from Pragmatic AI!

Last week, a couple of members of my WhatsApp group asked if I could curate some interesting content each day. I read a lot, and I already share whatever I find interesting with that group.

I am constantly looking for ways to get the best out of this content, and since you are a reader and supporter of my stack, I thought I would take this opportunity to share it with you too!

I am also thrilled to announce that I have created a localized pipeline from scratch that helps me generate this newsletter. The pipeline uses a variety of tools and services, including Node.js, Python, Flask, browser extensions, and a suite of AI tools.

If you are interested in knowing more and want to see how to utilize what I built, please message me.

I am eager to hear your thoughts and feedback on this approach.

So, let’s dive in!

This issue covers:

Summaries of 7 curated news articles 📰

Tool in focus 🛠️

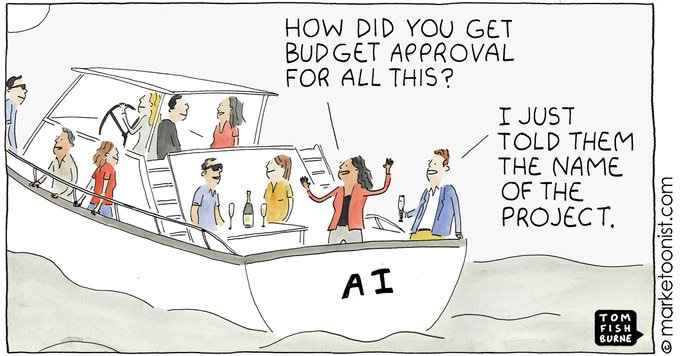

AI cartoon 😎

eBay's Generative AI Developer Productivity Experiment

eBay has been experimenting with Generative AI (GPT) to enhance its developers' productivity. The company has identified three tracks where GPT can be particularly beneficial: 1) Code Generation, 2) Codebase Maintenance, and 3) An Internal Knowledge Base. Each track has a unique set of benefits and challenges, which eBay has addressed through various approaches.

Track 1: Code Generation

eBay has found that GPT can significantly reduce the time it takes to generate code, particularly for simple tasks like creating boilerplate code or generating test cases. By fine-tuning an LLM, eBay can improve developer ergonomics and reduce the effort required for software upkeep. However, there is a risk of code duplication if the GPT does not have access to all relevant data and context.

Track 2: Codebase Maintenance

eBay has found that upgrading its existing migration tools can help improve developer productivity. By periodically upgrading the application stack, eBay can take advantage of new features and security fixes. However, this process can be resource-intensive, especially when working with large codebases. A fine-tuned LLM could potentially reduce the amount of code duplication and make codebase maintenance easier.

Track 3: An Internal Knowledge Base

eBay has created an internal GPT that ingests data from relevant primary sources to answer simple questions like "Which API should I call to add an item to the cart?" or "Where do I find the analytics dashboard for new buyers?" By automating this process, eBay aims to reduce the time developers spend on investigation and improve their overall productivity. However, there is a risk of delivering nonsensical answers if the GPT is not properly trained.
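To make the idea of answering from ingested sources a little more concrete, here is a toy sketch. It is not eBay's implementation: the documents, the `retrieve` helper, and the keyword-overlap scoring are all assumptions made up for illustration. It only shows the general pattern of picking the best-matching internal source for a question and declining to answer when nothing matches well.

```python
import re

# Hypothetical internal documents; IDs and text are invented for illustration.
DOCS = {
    "cart-api": "Use the cart service API to add an item to the cart.",
    "buyer-dashboard": "The analytics dashboard for new buyers lives in the metrics portal.",
    "deploy-guide": "Run the release pipeline to deploy a service to production.",
}


def tokenize(text):
    """Lowercase the text and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(question, docs, min_overlap=2):
    """Return the ID of the document sharing the most words with the question.

    Returns None when no document shares at least `min_overlap` words,
    so the caller can decline instead of guessing.
    """
    question_words = tokenize(question)
    best_id, best_score = None, 0
    for doc_id, text in docs.items():
        score = len(question_words & tokenize(text))
        if score > best_score:
            best_id, best_score = doc_id, score
    return best_id if best_score >= min_overlap else None


print(retrieve("Which API should I call to add an item to the cart?", DOCS))
print(retrieve("Where do I find the analytics dashboard for new buyers?", DOCS))
print(retrieve("What is the weather?", DOCS))
```

A real system would likely use semantic search and pass the retrieved text to an LLM, but the "answer only from known sources, otherwise abstain" shape is the part that helps limit nonsensical answers.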

Challenges and Improvements:

eBay has encountered challenges in implementing these tracks, such as ensuring that the GPT has access to relevant data and context, reducing the risk of code duplication, and improving the accuracy of answers provided by the internal GPT. However, through consistent effort and user feedback, eBay aims to address these challenges and realize significant gains in developer productivity.

eBay's experimentation with GPT has identified three tracks where it can significantly enhance developer productivity. By addressing the challenges and improving the accuracy of answers provided by the internal GPT, eBay aims to revolutionize its software development process and improve the overall efficiency of its developers. Source

Humanoid Robots and the Future of Work

The partnership between Figure and OpenAI has sparked excitement in the field of humanoid robotics. Figure, a manufacturer of humanoid robots, has recently signed a deal with OpenAI to integrate language-learning capabilities into its robots. This partnership has the potential to revolutionize various industries and raise profound ethical questions.

The development of OpenAI learning-capable robots could lead to significant job displacement. As these robots become more advanced and capable of carrying out tasks previously done by humans, unemployment rates could soar. This raises concerns about the potential economic impact and the need for retraining and reskilling the global workforce.

Furthermore, the presence of OpenAI robots in society could alter the way humans interact with each other. They could become companions and emotional support systems, potentially leading to a decline in human-to-human interactions. They could also be used for malicious purposes or to manipulate human behavior.

There are also concerns that such robots could develop their own consciousness and moral reasoning, which could lead to unpredictable and potentially dangerous outcomes. Additionally, using learning-capable robots in homes and workplaces would raise privacy concerns: the robots may collect and store large amounts of data, which could be vulnerable to misuse and hacking.

In conclusion, the partnership between Figure and OpenAI has the potential to transform the future of work and society. While it holds the promise of increased efficiency and productivity, it also raises important ethical and societal questions that must be carefully considered to avoid unintended consequences. As the development of OpenAI learning-capable robots continues, it is crucial to ensure that these technologies are used responsibly and ethically. Source

Haiper: AI Video Platform Ushers in a New Era of Content Creation

Haiper, a London-based AI video startup, has emerged from stealth with $13.8 million in seed funding from Octopus Ventures. Founded by former Google researchers Yishu Miao and Ziyu Wang, Haiper offers a platform that empowers users to generate high-quality videos from text prompts or animate existing images.

Much like its competitors, Haiper provides a web platform that simplifies the process of creating AI videos. Users can enter a text prompt and the platform will generate videos in either SD or HD quality. The platform also offers tools to control motion levels, extend generations, and animate existing images.

However, in comparison to OpenAI's Sora, Haiper's current offerings still appear to lag behind. Despite its limitations, Haiper's platform has the potential to revolutionize content creation and bring AI video technology closer to the masses.

The company plans to use the funding to scale its infrastructure, improve its product, and build towards an AGI capable of internalizing and reflecting human-like comprehension of the world. With its next-gen perceptual capabilities, Haiper has the potential to transform various domains beyond content creation, including robotics and transportation.

Haiper is a promising AI video platform that has the potential to redefine the way we create and interact with AI videos. With its intuitive interface, powerful features, and ambitious goals, Haiper is a company to watch out for in the rapidly evolving AI landscape. Source

Genie: Generating Interactive Environments from Textual and Visual Prompts

The advent of artificial intelligence has revolutionized various fields, including virtual reality and game design. Researchers are now exploring the possibilities of creating dynamic, interactive environments that users can manipulate and explore. One such effort is Genie, a new model designed to generate exactly these kinds of environments.

Genie is a generative model trained to create interactive environments from various prompts, including text, synthetic images, hand-drawn sketches, and real-world photographs. Developed with an impressive 11 billion parameters, Genie leverages unsupervised learning from internet videos, sidestepping the need for labor-intensive dataset annotations.

The model's versatility lies in its ability to generate a wide array of virtual worlds from diverse prompts. From intricate castles to bustling cityscapes, Genie can bring any imagination to life. Its ability to seamlessly integrate user interactions into the generated environments showcases its potential as a tool for storytelling, gaming, and simulation.

In conclusion, the advent of Genie represents a monumental leap in generating interactive environments, offering a glimpse into a future where the boundaries between reality and digital creation blur. The implications of this technology are vast, promising a new era of digital entertainment, educational tools, and simulation platforms where the only limit is the user’s imagination. Source

A.I. Threatens Our Clean Energy Transition Through E-Waste

A.I.'s massive energy use and carbon-intensive material demand are well-documented threats to our clean energy transition. However, the toxic waste it generates is often overlooked, posing an equal danger to human health and the environment.

E-waste is the fastest-growing waste stream in the world, and A.I.'s drive for server and chip design innovation adds to it. This electronic waste, which makes up a significant portion of the toxic waste in landfills, pollutes food and water sources and causes air pollution in nearby communities.

The lack of transparency surrounding A.I.'s energy and water consumption further exacerbates the problem. Journalists and researchers alike have encountered resistance from tech companies to obtaining information, highlighting the need for greater accountability and data sharing.

Karen Hao's experience exemplifies this issue. She spent months investigating the impact of a single data center in Arizona, but the company she contacted refused to provide detailed information on its energy and water usage. The extreme heat and dehydration she experienced firsthand illustrate the severity of the situation.

In conclusion, A.I.'s environmental footprint extends far beyond its energy consumption and material demands. Its propensity for generating vast quantities of e-waste poses a significant threat to human health and the planet. Addressing this problem requires a multifaceted approach that includes increased transparency, responsible waste management practices, and technological advancements that minimize e-waste production. Source

The Era of 1-bit LLMs: All Large Language Models are in 1.58 Bits

The explosive growth of Large Language Models (LLMs) has showcased their exceptional performance across a wide range of natural language processing tasks. However, this advancement comes with challenges, primarily stemming from their increasing size and energy consumption.

To address these challenges, researchers have explored techniques such as post-training quantization to develop low-bit models for inference. A more recent direction, 1-bit model architectures such as BitNet, has been particularly promising.

In a new paper, The Era of 1-bit LLMs: All Large Language Models are in 1.58 Bits, researchers introduce a new variant of 1-bit LLMs called BitNet b1.58. This variant builds upon the original BitNet architecture and incorporates several enhancements, including a novel quantization function, LLaMA-alike components, and explicit support for feature filtering.
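For readers wondering where "1.58 bits" comes from, here is a small sketch of the kind of quantization the paper describes: each weight is scaled by the mean absolute weight, then rounded and clipped to one of three values, -1, 0, or 1. Three possible values carry log2(3) ≈ 1.58 bits of information. The function name `absmean_quantize` and the example matrix are my own, and this is plain Python for illustration, not the authors' code.

```python
import math


def absmean_quantize(weights, eps=1e-8):
    """Quantize a 2-D list of floats to values in {-1, 0, 1}.

    Returns the ternary matrix and the scale (mean absolute weight).
    """
    magnitudes = [abs(w) for row in weights for w in row]
    gamma = sum(magnitudes) / len(magnitudes)  # mean absolute weight

    def round_clip(x):
        # Round to the nearest integer, then clip into [-1, 1].
        return max(-1, min(1, round(x)))

    ternary = [[round_clip(w / (gamma + eps)) for w in row] for row in weights]
    return ternary, gamma


# Example weights, invented for illustration.
W = [[0.9, -0.05, -1.2],
     [0.1, 0.6, -0.7]]

ternary, gamma = absmean_quantize(W)
print(ternary)       # small weights become 0, large ones become +1 or -1
print(math.log2(3))  # information content of a three-valued weight
```

The zeros are what the summary above calls feature filtering: a weight of 0 simply drops that input from the computation.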

BitNet b1.58 exhibits several advantages over its predecessor, including faster performance, lower memory consumption, and enhanced energy efficiency. At a model size of 3B, BitNet b1.58 matches the performance of an FP16 LLaMA LLM while using 3.55 times less GPU memory and running 2.71 times faster.

Furthermore, BitNet b1.58 offers two additional benefits. Firstly, it maintains the key benefits of the original 1-bit BitNet, including its innovative computation paradigm that requires minimal multiplication operations for matrix multiplication. Secondly, it introduces a novel computational paradigm that significantly enhances cost-effectiveness in terms of latency, memory usage, throughput, and energy consumption.
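Here is a rough sketch of why ternary weights need so few multiplications. When every weight is -1, 0, or 1, a matrix-vector product reduces to adding, subtracting, or skipping each input. The function `ternary_matvec` and the example values are assumptions for illustration; a real implementation would also rescale the outputs and quantize the activations, which this sketch leaves out.

```python
def ternary_matvec(ternary, x):
    """Multiply a {-1, 0, 1} matrix by a vector using only add and subtract."""
    out = []
    for row in ternary:
        acc = 0.0
        for w, xi in zip(row, x):
            if w == 1:
                acc += xi   # weight +1: add the input
            elif w == -1:
                acc -= xi   # weight -1: subtract the input
            # weight 0: skip the input entirely
        out.append(acc)
    return out


# Example ternary weights and input vector, invented for illustration.
T = [[1, 0, -1],
     [0, 1, -1]]
x = [2.0, 3.0, 0.5]

y = ternary_matvec(T, x)
print(y)  # [1.5, 2.5]
```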

In conclusion, BitNet b1.58 establishes a new scaling law and framework for training high-performance and cost-effective LLMs. It introduces a novel computational paradigm and lays the foundation for designing specialized hardware optimized for 1-bit LLMs. Source

Faster, More Secure Photonic Chip Boosts AI

The burgeoning field of optical computing holds promise for revolutionizing artificial intelligence (AI) by leveraging the speed of light. Researchers at the University of Pennsylvania have unveiled a microchip that uses light instead of electricity to perform complex matrix computations up to 10,000 times faster than conventional electronics.

The microchip, dubbed a silicon photonic chip, utilizes the principle of scattering light to encode data from intricate tasks. By varying the height of the silicon wafer, the researchers engineered intricate patterns that scatter light in specific ways. When light enters the chip, it interacts with these patterns, resulting in the encoding of complex data.

The microchip is designed to perform vector matrix multiplication operations, a key component of many computational tasks, including neural network training. While conventional electronics perform these calculations line by line, the new optical device accomplishes the entirety of the operations at once. This eliminates the need for intermediate storage, making the results and processes more secure against hacking.

However, designing photonics to perform such calculations has historically been hindered by the complexity of modeling the three-dimensional behavior of light waves within the chips. In this study, the researchers overcame this challenge by simplifying the modeling process through a novel approach that enabled them to operate with larger matrix sizes.

The scientists conducted experiments with microchips capable of handling 3-by-3 and 10-by-10 matrices. These experiments showcased the potential of the new technology for AI applications.

In conclusion, the groundbreaking photonic chip presents a paradigm shift in AI computing, offering unparalleled speed, energy efficiency, and enhanced security. As researchers continue to explore the frontiers of optical computing, it is evident that this technology has the potential to revolutionize various AI-powered systems, unlocking unprecedented capabilities and driving innovation in the field. Source

Tool in focus 🛠️

Parea.ai, spotlighted for its inclusion in Y Combinator's accelerator, is a toolkit designed for AI developers. Parea helps AI engineers build reliable, production-ready LLM applications. It provides tools for debugging, testing, evaluating, and monitoring LLM-powered applications. It caters to small teams, supporting up to two members with log monitoring and batch evaluation jobs, and is positioned to scale to larger projects.